Observations¶

The Observation class, is the container for the data acquired by the

telescope during a scaning period (and the relevant information about it).

Serial applications¶

In a serial code Observation is equivalent to an empty class in which you

can put anything:

import litebird_sim as lbs

import numpy as np

obs = lbs.Observation(

detectors=2,

start_time_global=0.0,

sampling_rate_hz=5.0,

n_samples_global=5,

)

obs.my_new_attr = 'value'

setattr(obs, 'another_new_attr', 'another value')

Across the framework, the coherence in the names and content of the attributes

is guaranteed by convention (no check is done by the Observation

class).

There are two more conventions. First, obs.tod is a 2-D array.

obs.tod[0] is the time stream of the first detector.

obs.tod[:, 0] are the first time sample of all the detectors.

Second, detector-specific attributes are collected in arrays:

obs.calibration_factors is a 1-D array with one entry per

detector.

Thus, typical operations look like this:

# Collect detector properties in arrays

obs.calibration_factors = np.array([1.1, 1.2])

obs.wn_levels = np.array([2.1, 2.2])

# Apply to each detector its own calibration factor

obs.tod *= obs.calibration_factors[:, None]

# Add white noise at a different level for each detector

obs.tod += (np.random.normal(size=obs.tod.shape)

* obs.wn_levels[:, None])

Parallel applications¶

The only work that the Observation class actually does is handling

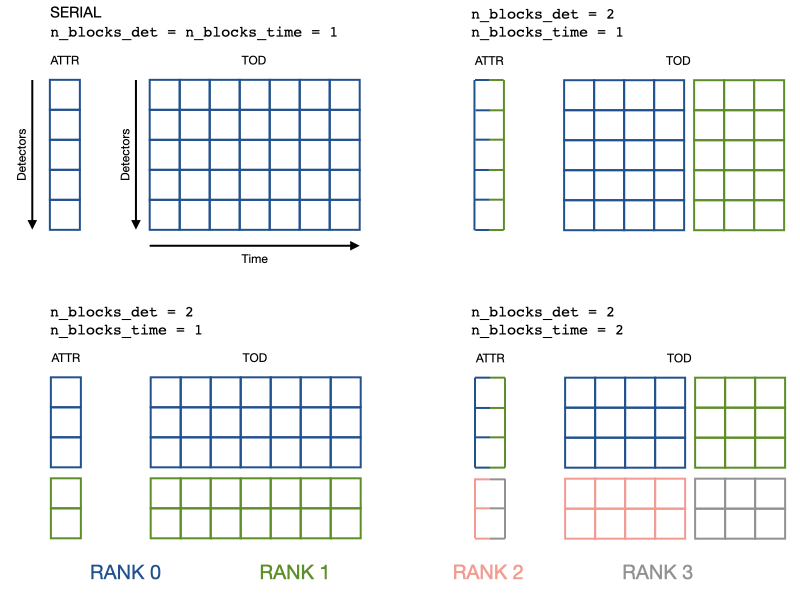

parallelism. obs.tod can be distributed over a

n_blocks_det by n_blocks_time grid of MPI ranks. The blocks can be

changed at run-time.

The coherence between the serial and parallel operations is achieved by

distributing also the arrays of detector properties:

each rank keeps in memory only an interval of calibration_factors or

wn_levels, the same detector interval of tod.

The main advantage is that the example operations in the Serial section are achieved with the same lines of code. The price to pay is that you have to set detector properties with special methods.

import litebird_sim as lbs

from mpi4py import MPI

comm = MPI.COMM_WORLD

obs = lbs.Observation(

detectors=2,

start_time_global=0.0,

sampling_rate_hz=5.0,

n_samples_global=5,

n_blocks_det=2, # Split the detector axis in 2

comm=comm # across the processes of this communicator

)

# Add detector properties either with a global array that contains

# all the detectors (which will be scattered across the processor grid)

obs.setattr_det_global('calibration_factors', np.array([1.1, 1.2]))

# Or with the local array, if somehow you have it already

if comm.rank == 0:

wn_level_local = np.array([2.1])

elif comm.rank == 1:

wn_level_local = np.array([2.2])

else:

wn_level_local = np.array([])

obs.setattr_det('wn_levels', wn_level_local)

# Operate on the local portion of the data just like in serial code

# Apply to each detector its own calibration factor

obs.tod *= obs.calibration_factors[:, None]

# Add white noise at a different level for each detector

obs.tod += (np.random.normal(size=obs.tod.shape)

* obs.wn_levels[:, None])

# Change the data distribution

obs.set_blocks(n_blocks_det=1, n_blocks_time=1)

# Now the rank 0 has exactly the data of the serial obs object

For clarity, here is a visualization of how data (a detector attribute and the TOD) gets distributed.

When n_blocks_det != 1, keep in mind that obs.tod[0] or

obs.wn_levels[0] are quantities of the first local detector, not global.

This should not be a problem as the only thing that matters is that the two

quantities refer to the same detector. If you need the global detector index,

you can get it with obs.det_idx[0], which is created

at construction time.

Other notable functionalities¶

The starting time can be represented either as floating-point values (appropriate in 99% of the cases) or MJD; in the latter case, it is handled through the AstroPy library.

Instead of adding detector attributes after construction, you can pass a list of dictionaries (one entry for each detectors). One attribute is created for every key.

import litebird_sim as lbs

from astropy.time import Time

# Second case: use MJD to track the time

obs_mjd = lbs.Observation(

detectors=[{"name": "A"}, {"name": "B"}]

start_time_global=Time("2020-02-20", format="iso"),

sampling_rate_hz=5.0,

nsamples_global=5,

)

obs.name == np.array(["A", "B"]) # True

API reference¶

-

class

litebird_sim.observations.Observation(detectors: Union[int, List[dict]], n_samples_global: int, start_time_global: Union[float, astropy.time.core.Time], sampling_rate_hz: float, allocate_tod=True, dtype_tod=<class 'numpy.float32'>, n_blocks_det=1, n_blocks_time=1, comm=None, root=0)¶ Bases:

objectAn observation made by one or multiple detectors over some time window

After construction at least the following attributes are available -

start_time()-n_detectors()-n_samples()-tod()2D array (n_detectors by n_samples) stacking the timesstreams of the detectors

A note for MPI-parallel application: unless specified, all the variables are local. Should you need the global counterparts, 1) think twice, 2) append _global to the attribute name. -

start_time_global()-n_detectors_global()~ n_detectors * n_blocks_det -n_samples_global()~ n_samples * n_blocks_timeFollowing the same phylosophy also -

get_times()returns the time stamps of the local time interval- Parameters

detectors (int/list of dict) – Either the number of detectors or a list of dictionaries with one entry for each detector. The keys of the dictionaries will become attributes of the observation. If a detector is missing a key it will be set to

nan. If an MPI communicator is passed tocomm,detectorsis relevant only for therootprocessn_samples_global (int) – The number of samples in this observation.

start_time_global – Start time of the observation. It can either be a astropy.time.Time type or a floating-point number. In the latter case, it must be expressed in seconds.

sampling_rate_hz (float) – The sampling frequency, in Hertz.

dtype_tod (dtype) – Data type of the TOD array. Use it to balance numerical precision and memory consumption.

n_blocks_det (int) – divide the detector axis of the tod (and all the arrays of detector attributes) in n_blocks_det blocks

n_blocks_time (int) – divide the time axis of the tod in n_blocks_time blocks

comm – either None (do not use MPI) or a MPI communicator object, like mpi4py.MPI.COMM_WORLD. Its size is required to be at least n_blocks_det times n_blocks_time

root (int) – rank of the process receiving the detector list, if

detectorsis a list of dictionaries, otherwise it is ignored.

-

get_det2ecl_quaternions(spin2ecliptic_quats: litebird_sim.scanning.Spin2EclipticQuaternions, detector_quats, bore2spin_quat, quaternion_buffer=None, dtype=<class 'numpy.float64'>)¶ Deprecated: see scanning.get_det2ecl_quaternions

-

get_ecl2det_quaternions(spin2ecliptic_quats: litebird_sim.scanning.Spin2EclipticQuaternions, detector_quats, bore2spin_quat, quaternion_buffer=None, dtype=<class 'numpy.float64'>)¶ Deprecated: see scanning.get_ecl2det_quaternions

-

get_pointing_buffer_shape()¶ Deprecated: see scanning.get_pointing_buffer_shape

-

get_pointings(spin2ecliptic_quats: litebird_sim.scanning.Spin2EclipticQuaternions, detector_quats, bore2spin_quat, quaternion_buffer=None, dtype_quaternion=<class 'numpy.float64'>, pointing_buffer=None, dtype_pointing=<class 'numpy.float32'>)¶ Deprecated: see scanning.get_pointings

-

get_quaternion_buffer_shape(num_of_detectors=None)¶ Deprecated: see scanning.get_quaternion_buffer_shape

-

get_times(normalize=False, astropy_times=False)¶ Return a vector containing the time of each sample in the observation

The measure unit of the result depends on the value of astropy_times: if it’s true, times are returned as astropy.time.Time objects, which can be converted to several units (MJD, seconds, etc.); if astropy_times is false (the default), times are expressed in seconds. In the latter case, you should interpret these times as sidereal.

If normalize=True, then the first time is zero. Setting this flag requires that astropy_times=False.

This can be a costly operation; you should cache this result if you plan to use it in your code, instead of calling this method over and over again.

Note for MPI-parallel codes: the times returned are only those of the local portion of the data. This means that

the size of the returned array is

n_samples, smaller thann_samples_globalwhenever there is more than one time-blockself.tod * self.get_times()is a meaningless but always allowed operation

-

property

n_blocks_det¶

-

property

n_blocks_time¶

-

property

n_detectors¶

-

property

n_detectors_global¶ Total number of detectors in the observation

If you need the number of detectors in the local TOD block

self.tod, use eithern_detectorsorself.tod.shape[0].

-

property

n_samples_global¶ Samples in the whole observation

If you need the time-lenght of the local TOD block

self.tod, use eithern_samplesorself.tod.shape[1].

-

property

sampling_rate_hz¶

-

set_n_blocks(n_blocks_det=1, n_blocks_time=1)¶ Change the number of blocks

- Parameters

n_blocks_det (int) – new number of blocks in the detector direction

n_blocks_time (int) – new number of blocks in the time direction

-

setattr_det(name, info)¶ Add a piece of information about the detectors

Store

infoas the attributenameof the observation. The difference with respect toself.name = info, relevant only in MPI programs, areinfois assumed to contain a number of elements equal toself.tod.shape[0]and to have the same order (info[i]is a property ofself.tod[i])When changing

n_blocks_det,set_n_blocks()is aware ofnameand will redistributeinfoin such a way thatself.name[i]is a property ofself.tod[i]in the new block distribution

- Parameters

name (str) – Name of the detector information

info (array) – Information to be stored in the attribute

name. The array must contain one entry for each local detector.

-

setattr_det_global(name, info, root=0)¶ Add a piece of information on the detectors

Variant of

setattr_det()to be used when the information comes from a single MPI rank (root). In particular,In the

rootprocess,infois required to haven_detectors_globalelements (notself.tod.shape[1]). For other processes info is irrelevantinfois scattered from therootrank to the relevant processes inself.commso thatself.namewill haveself.tod.shape[0]elements on all the processes andself.name[i]is a property ofself.tod[i]When changing

n_blocks_det,set_n_blocks()is aware ofnameand will redistributeinfoin such a way thatself.name[i]is a property ofself.tod[i]in the new block distribution

- Parameters

name (str) – Name of the information

info (array) – Array containing

n_detectors_globalentries. Relevant only for thrrootprocessroot (int) – Rank of the root process